Timeline of Future of Life Institute

This is a timeline of the Future of Life Institute (FLI).

Sample questions

This section provides some sample questions for readers who may not have a clear goal when browsing the timeline. It serves as a guide to help readers approach the page with more purpose and understand the timeline’s significance.

Here are some interesting questions this timeline can answer:

- What are FLI's main initiatives and projects since its inception?

- Which key reports and publications have been released by FLI that shape policy discussions on emerging technologies?

- How has FLI contributed to policy recommendations related to AI, biotechnology, and nuclear weapons?

- What collaborations and partnerships has FLI formed to advance its mission?

For more information on evaluating the timeline's coverage, see Representativeness of events in timelines.

Big picture

| Time period | Development summary | More details |

|---|---|---|

| 2014–2020 | Founding and establishment of AI safety as a priority | The Future of Life Institute (FLI) is established in 2014 with a focus on steering transformative technologies away from existential risks. During this period, FLI launches its first grants program, allocating $7 million to AI safety research (2015), organizes the Puerto Rico AI Safety Conference (2015), sponsors the Asilomar Conference on Beneficial AI (2017), and releases the influential "Slaughterbots" video (2017). These initiatives foster collaboration between researchers and policymakers and set the stage for global discussions on AI ethics and safety.[1][2] |

| 2020–2024 | Global advocacy and advanced AI concerns | Building on its early work, FLI expands its efforts to influence global AI policy. In 2020, it releases the "AI Policy: Global Perspectives" report and begins advocating for robust governance frameworks. In 2023, FLI publishes the open letter "Pause Giant AI Experiments," calling for a six-month halt on training advanced AI systems. The organization participates in global summits, such as the AI Safety Summit (2023), and launches initiatives like the AI Safety Grants Program (2024), solidifying its role as a leader in addressing AI safety and governance.[3][4] |

Full timeline

Here are the inclusion criteria for various event types:

- For "Publication", the intention is to include the most notable publications. This usually means that if a publication has been featured by FHI itself or has been discussed by some outside sources, it is included. There are too many publications to include all of them.

- For "Website", the intention is to include all websites associated with FHI. There are not that many such websites, so this is doable.

- For "Staff", the intention is to include all Research Fellows and leadership positions (so far, Nick Bostrom has been the only director so not much to record here).

- For "Workshop" and "Conference", the intention is to include all events organized or hosted by FHI, but not events where FHI staff only attended or only helped with organizing.

- For "Internal review", the intention is to include all annual review documents.

- For "External review", the intention is to include all reviews that seem substantive (judged by intuition). For mainstream media articles, only ones that treat FHI/Bostrom at length are included.

- For "Financial", the intention is to include all substantial (say, over $10,000) donations, including aggregated donations and donations of unknown amounts.

- For "Nick Bostrom", the intention is to include events sufficient to give a rough overview of Bostrom's development prior to the founding of FHI.

- For "Social media", the intention is to include all social media account creations (where the date is known) and Reddit AMAs.

- Events about FHI staff giving policy advice (to e.g. government bodies) are not included, as there are many such events and it is difficult to tell which ones are more important.

- For "Project Announcement" or "Initiatives", the intention is to include announcements of major initiatives and research programs launched by FHI, especially those aimed at training researchers or advancing existential risk mitigation.

- For "Collaboration", the intention is to include significant collaborations with other institutions where FHI co-authored reports, conducted joint research, or played a major role in advising.

| Year | Month and date | Event type | Details |

|---|---|---|---|

| 2014 | March | Founding | The Future of Life Institute (FLI) is established by Max Tegmark, Jaan Tallinn, Anthony Aguirre, Viktoriya Krakovna, and Meia Chita-Tegmark. The organization aims to steer transformative technologies toward benefiting life and away from large-scale risks, with a focus on existential risks from advanced artificial intelligence (AI).[5] |

| 2014 | May 24 | Launch Event | FLI hosts its inaugural event at MIT, titled "The Future of Technology: Benefits and Risks," featuring a panel discussion moderated by Alan Alda, with panelists including George Church, Frank Wilczek, and Jaan Tallinn.[6] |

| 2015 | January 2–5 | Conference | FLI organizes its first conference, "The Future of AI: Opportunities and Challenges," in San Juan, Puerto Rico, bringing together leading AI researchers and experts to discuss the future of artificial intelligence.[7] |

| 2015 | January 22 | Grants Program | FLI releases an international Request for Proposals (RFP) to fund research aimed at ensuring AI remains robust and beneficial. The initiative seeks to address potential risks associated with AI development.[8] |

| 2015 | July 1 | Grants Awarded | FLI announces grant recommendations, allocating $7 million to 37 research projects focused on AI safety and ethics.[9] |

| 2015 | September 1 | Policy Exchange | FLI co-organizes a policy exchange event with the Centre for the Study of Existential Risk (CSER) at Harvard University, discussing the implications of machines surpassing human intelligence.[10] |

| 2015 | December 10 | Symposium | FLI co-sponsors the NIPS Symposium on the Societal Impacts of Machine Learning: Algorithms Among Us, held at the Montreal Convention Center, Quebec, Canada.[11] |

| 2016 | February 13 | Workshop | FLI co-organizes the "AI, Ethics, and Society" workshop in Phoenix, Arizona, bringing together experts to discuss the ethical implications of artificial intelligence development.[15] |

| 2016 | April 2 | Conference | FLI hosts the "Reducing the Dangers of Nuclear War" conference at MIT, focusing on strategies to mitigate the risks associated with nuclear weapons.[16] |

| 2016 | December 9 | Workshop | FLI sponsors the "Interpretable Machine Learning for Complex Systems" workshop at the Neural Information Processing Systems (NIPS) conference, emphasizing the importance of transparency in AI systems.[17] |

| 2017 | January 5–8 | Conference | FLI sponsors the Asilomar Conference on Beneficial AI, bringing together experts to discuss principles for the safe and beneficial development of AI technologies.[18] |

| 2017 | April 21 | Conference | FLI hosts the "Reducing the Threat of Nuclear War" conference at MIT, addressing the escalating risks of nuclear conflict and exploring disarmament strategies.[19] |

| 2017 | October 27 | Award | FLI presents the inaugural Future of Life Award to Vasili Arkhipov, recognizing his role in preventing a potential nuclear war during the Cuban Missile Crisis.[20] |

| 2017 | November 12 | Video Release | FLI releases the "Slaughterbots" video, a short film depicting the potential dangers of autonomous weapons, to raise public awareness and advocate for international regulation.[21] |

| 2018 | April | Publication | FLI releases a report on the potential risks of lethal autonomous weapons, advocating for international regulations to prevent their misuse.[22] |

| 2018 | August 30 | Policy Advocacy | The California State Legislature unanimously adopts legislation supporting the Future of Life Institute's Asilomar AI Principles, promoting the safe and beneficial development of artificial intelligence.[23] |

| 2018 | September 26 | Award | FLI honors Stanislav Petrov with the Future of Life Award for his role in averting a potential nuclear war in 1983, recognizing his significant contribution to global safety.[24] |

| 2019 | January 2–7 | Conference | FLI hosts the Beneficial AGI 2019 conference in Puerto Rico, bringing together AI researchers and thought leaders to discuss the future of artificial general intelligence and strategies to ensure its alignment with human values.[25] |

| 2019 | March | Initiative | FLI launches a campaign to raise awareness about the dangers of deepfakes and the need for technological and policy measures to combat them.[26] |

| 2019 | April 9 | Award | FLI presents the Future of Life Award to Dr. Matthew Meselson for his efforts in preventing the use of biological weapons, highlighting his contributions to global security.[27] |

| 2019 | March 28–31 | Conference | FLI co-hosts the Augmented Intelligence Summit in Scotts Valley, California, focusing on steering the future of AI towards augmenting human intelligence and ensuring its beneficial development.[28] |

| 2020 | March | Initiative | FLI launches a campaign to raise awareness about the dangers of deepfakes and the need for technological and policy measures to combat them.[29] |

| 2020 | August | Publication | FLI releases a comprehensive report titled "AI Policy: Global Perspectives," analyzing international approaches to AI governance and offering recommendations for harmonizing global AI policies.[30] |

| 2020 | October | Event | FLI hosts the "Global AI Safety Summit," bringing together experts from academia, industry, and government to discuss strategies for ensuring the safe development and deployment of AI technologies.[31] |

| 2020 | November 22 | Publication | Max Tegmark, co-founder of FLI, publishes "Life 3.0," a book exploring the future of artificial intelligence and its implications for humanity.[32] |

| 2021 | February | Collaboration | FLI collaborates with leading AI research institutions to develop the "AI Safety Guidelines," a set of best practices aimed at mitigating risks associated with AI development. These guidelines focus on transparency, accountability, and ethical considerations in AI research and deployment. [33] |

| 2021 | June | Policy Advocacy | FLI submits a policy brief to the United Nations, advocating for the establishment of an international regulatory framework for autonomous weapons systems. The brief warns about the dangers of lethal autonomous weapons and calls for a ban on their development to prevent an arms race and ensure global security. [34] |

| 2021 | August | Policy Advocacy | FLI actively participates in discussions surrounding the European Union's Artificial Intelligence Act, advocating for robust safety measures and ethical guidelines in AI development. FLI emphasizes the importance of balancing innovation with safeguards to address potential risks. [35] |

| 2021 | September | Research Grant | FLI awards $5 million in grants to support research projects focused on AI alignment and robustness. These grants are designed to address challenges in ensuring that AI systems act in accordance with human values, promoting safety and ethical considerations. [36] |

| 2022 | January | Publication | FLI releases the "State of AI Safety 2022" report, providing an in-depth overview of challenges and advancements in AI safety research. The report highlights key areas like robustness, interpretability, and alignment, offering actionable recommendations for researchers and policymakers to mitigate risks. [37] |

| 2022 | April | Initiative | FLI launches the "AI Ethics in Education" program, creating resources and curricula to teach students about the ethical implications of AI technologies. The program aims to foster awareness among the next generation of leaders about the societal impacts of AI development and usage. [38] |

| 2022 | May | Open Letter | FLI publishes an open letter urging a global moratorium on the development and deployment of lethal autonomous weapons. The letter garners widespread support from policymakers, researchers, and civil society groups, highlighting the need for international regulations to prevent misuse. [39] |

| 2022 | July | Conference | FLI organizes the "International Conference on AI and Human Values," bringing together academics, policymakers, and technologists to explore how AI development can align with societal and ethical values. The conference emphasizes interdisciplinary collaboration to address the ethical and social challenges posed by AI. [40] |

| 2022 | October | Partnership | FLI partners with leading global tech companies to establish the "AI Safety Consortium," aimed at fostering collaboration and sharing best practices for safe AI development. The consortium seeks to create unified safety standards and address risks associated with advanced AI technologies. [41] |

| 2023 | March 29 | Open Letter | FLI publishes an open letter titled "Pause Giant AI Experiments," urging AI labs to pause for at least six months the training of AI systems more powerful than GPT-4. The letter highlights concerns about societal risks, including misinformation, job automation, and the potential loss of control over advanced AI systems. It calls for the creation of shared safety protocols for AI design and development, overseen by independent experts, to ensure AI systems are safe and beneficial. [42] |

| 2023 | September 22 | Policy Advocacy | FLI marks the expiration of the six-month pause proposed in their "Pause Giant AI Experiments" letter, reiterating the need for AI regulations to address risks posed by advanced AI systems. The institute advocates for the establishment of regulatory authorities, oversight of highly capable AI systems, and public funding for technical AI safety research. It also emphasizes the creation of auditing mechanisms and institutions to manage the economic and political disruptions associated with AI advancements. [43] |

| 2023 | October 30 | Policy Engagement | FLI provides formal input to U.S. federal agencies following the release of the White House's Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, offering technical and policy expertise on AI governance. |

| 2024 | February 14 | Funding | FLI announces the "Realizing Aspirational Futures" grant program, offering financial support to research projects that envision and work towards positive long-term outcomes in artificial intelligence development. This initiative aims to encourage innovative approaches that align AI advancements with beneficial societal impacts. [44] |

| 2024 | March 22 | Publication | On the one-year anniversary of their "Pause Giant AI Experiments" open letter, FLI assesses the global response and ongoing relevance of their call for a temporary halt on training AI systems more powerful than GPT-4. The reflection highlights the discourse generated among policymakers, researchers, and the public regarding AI safety and ethical considerations. [45] |

| 2024 | May 21–22 | Summit Participation | FLI participates in the second AI Safety Summit in Seoul, South Korea, co-hosted by the UK and South Korea. At the summit, FLI presents a policy statement advocating for the appointment of a global AI safety coordinator to unify international efforts, the establishment of transparent governance frameworks to ensure accountability and ethical AI development, and the creation of shared safety standards to address risks associated with advanced AI technologies. Additionally, FLI emphasizes the importance of aligning AI advancements with human values and societal well-being. These contributions significantly influence the adoption of the "Seoul Declaration for Safe, Innovative, and Inclusive AI," a commitment by nations to collaborate on AI safety governance.[46] |

| 2024 | November | Collaboration | FLI partners with international organizations to develop comprehensive guidelines for the ethical use of AI in military applications. This collaboration focuses on preventing the proliferation of lethal autonomous weapons by advocating for strict regulations and ensuring meaningful human oversight in military AI operations. It emphasizes the importance of aligning AI deployment with international humanitarian laws and ethical standards, fostering transparency and trust among global stakeholders. By promoting these key principles, FLI aims to mitigate risks associated with AI in conflict scenarios and establish robust governance frameworks for military AI systems. [47] |

Numerical and visual data

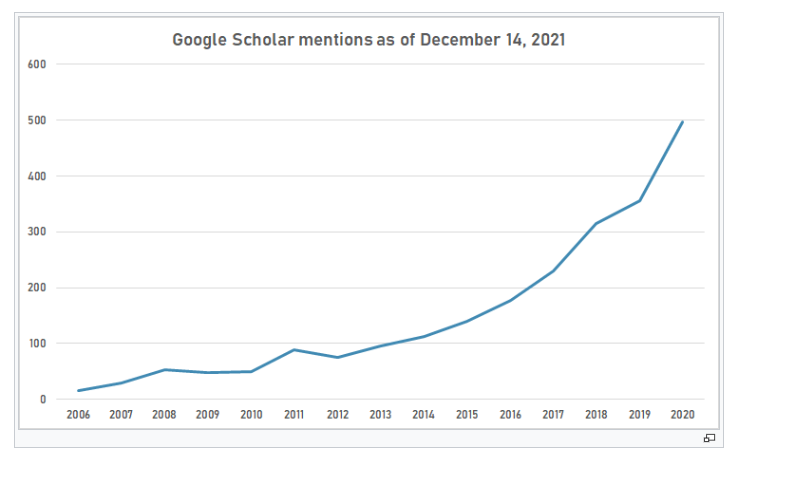

Google Scholar

The following table summarizes per-year mentions on Google Scholar as of November 16, 2024.

| Year | "Future of Life Institute" |

|---|---|

| 2015 | 111 |

| 2016 | 196 |

| 2017 | 286 |

| 2018 | 446 |

| 2019 | 539 |

| 2020 | 587 |

| 2021 | 521 |

| 2022 | 511 |

| 2023 | 1150 |

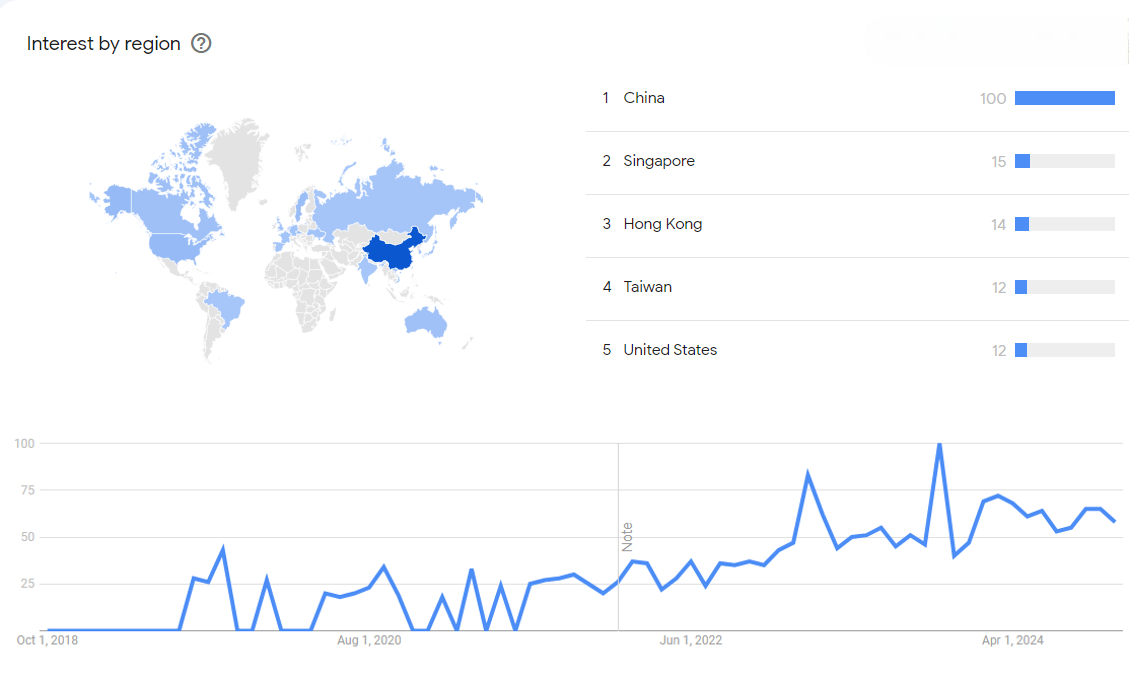

Google Trends

The image below shows Google Trends data for Center for Security and Emerging Technology (Research institute), from January 2015 to November 2024, when the screenshot was taken. Interest is also ranked by country and displayed on a world map.[48]

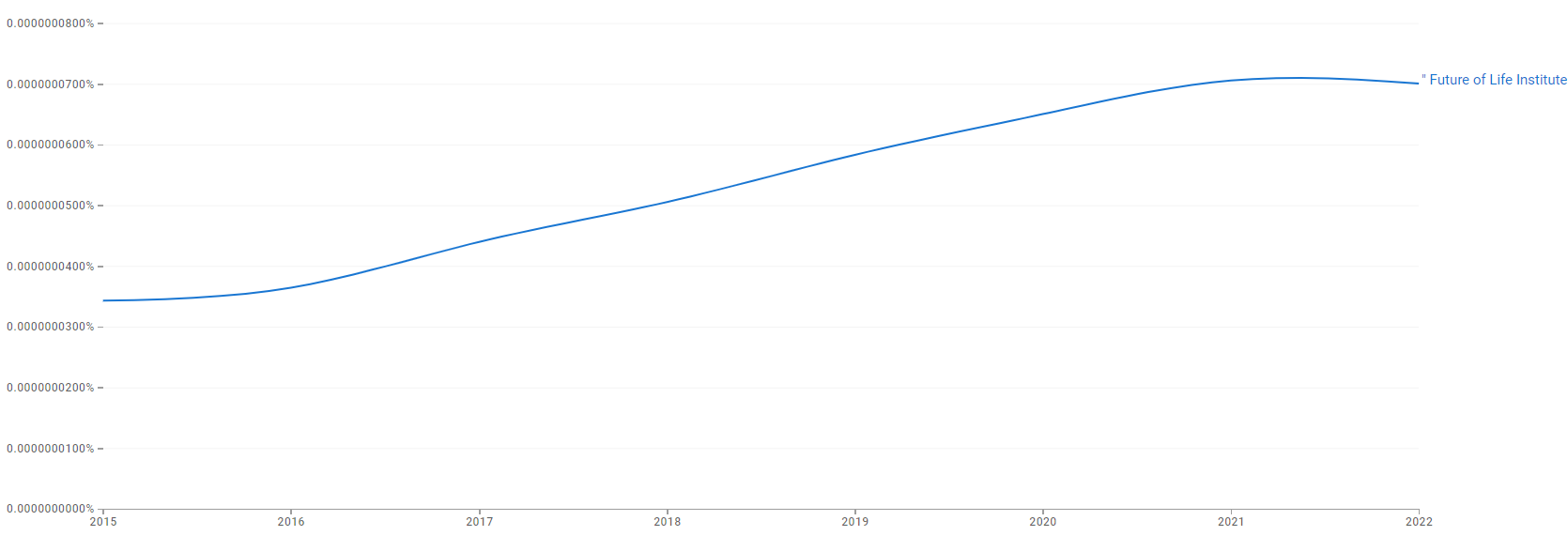

Google Ngram Viewer

The chart below shows Google Ngram Viewer data for Future of Life Institute, from 2015 to 2022.[49]

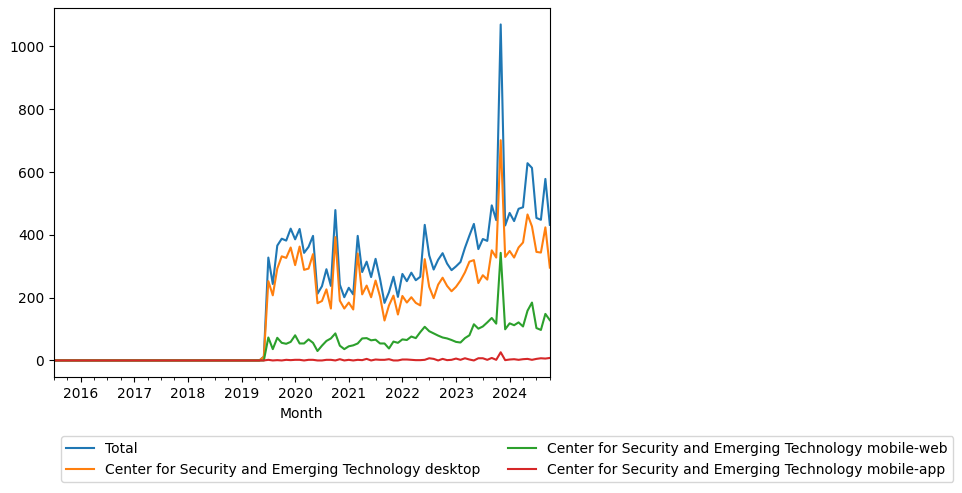

Wikipedia pageviews for CSET page

The following plots pageviews for the Center for Security and Emerging Technology Wikipedia page. The image was generated on [1].

External links

References

- ↑ "Asilomar AI Principles". Retrieved November 16, 2024.

- ↑ "Slaughterbots Video". Retrieved November 16, 2024.

- ↑ "Pause Giant AI Experiments". Retrieved November 16, 2024.

- ↑ "AI Safety Grants Program". Retrieved November 16, 2024.

- ↑ "Future of Life Institute Wikipedia". Retrieved November 16, 2024.

- ↑ "The Future of Technology: Benefits and Risks". Retrieved November 16, 2024.

- ↑ "AI Safety Conference in Puerto Rico". Retrieved November 16, 2024.

- ↑ "FLI Grants". Retrieved November 16, 2024.

- ↑ "FLI Grants Program Results". Retrieved November 16, 2024.

- ↑ "Policy Exchange: Co-organized with CSER". Retrieved November 16, 2024.

- ↑ "NIPS Symposium 2015". Retrieved November 16, 2024.

- ↑ "Future of Life Institute Wikipedia". Retrieved November 16, 2024.

- ↑ "FLI Grants". Retrieved November 16, 2024.

- ↑ "FLI Grants Program Results". Retrieved November 16, 2024.

- ↑ "AI, Ethics, and Society Workshop". Retrieved November 16, 2024.

- ↑ "Reducing the Dangers of Nuclear War Conference". Retrieved November 16, 2024.

- ↑ "FLI AI Activities". Retrieved November 16, 2024.

- ↑ "Beneficial AI 2017 Conference". Retrieved November 16, 2024.

- ↑ "Reducing the Threat of Nuclear War 2017 Conference". Retrieved November 16, 2024.

- ↑ "Vasili Arkhipov Receives Inaugural Future of Life Award". Retrieved November 16, 2024.

- ↑ "Slaughterbots Video". Retrieved November 16, 2024.

- ↑ "Lethal Autonomous Weapons Report". Retrieved November 16, 2024.

- ↑ "FLI 2018 Annual Report" (PDF). Retrieved November 16, 2024.

- ↑ "FLI 2018 Annual Report" (PDF). Retrieved November 16, 2024.

- ↑ "Beneficial AGI 2019 Conference". Retrieved November 16, 2024.

- ↑ "Deepfake Awareness Campaign". Retrieved November 16, 2024.

- ↑ "Dr. Matthew Meselson Wins 2019 Future of Life Award". Retrieved November 16, 2024.

- ↑ "FLI Events Work". Retrieved November 16, 2024.

- ↑ "Deepfake Awareness Campaign". Retrieved November 16, 2024.

- ↑ "AI Policy: Global Perspectives". Retrieved November 16, 2024.

- ↑ "Global AI Safety Summit". Retrieved November 16, 2024.

- ↑ "Life 3.0 by Max Tegmark". Retrieved November 16, 2024.

- ↑ "AI Safety Guidelines". Retrieved November 16, 2024.

- ↑ "UN Policy Brief on Autonomous Weapons". Retrieved November 16, 2024.

- ↑ "FLI on AI Regulation". Retrieved November 16, 2024.

- ↑ "AI Alignment Grants 2021". Retrieved November 16, 2024.

- ↑ "State of AI Safety 2022". Retrieved November 16, 2024.

- ↑ "AI Ethics in Education Program". Retrieved November 16, 2024.

- ↑ "Open Letter on Autonomous Weapons". Retrieved November 16, 2024.

- ↑ "International Conference on AI and Human Values". Retrieved November 16, 2024.

- ↑ "AI Safety Consortium". Retrieved November 16, 2024.

- ↑ "Pause Giant AI Experiments Open Letter". Retrieved November 16, 2024.

- ↑ "Six-Month Letter Expires: The Need for AI Regulation". Retrieved November 16, 2024.

- ↑ "Realizing Aspirational Futures: New FLI Grants Opportunities". Retrieved November 16, 2024.

- ↑ "The Pause Letter: One Year Later". Retrieved November 16, 2024.

- ↑ "Seoul AI Safety Summit Statement". Retrieved November 16, 2024.

- ↑ "AI Military Ethics Partnership". Retrieved November 16, 2024.

- ↑ "Center for Security and Emerging Technology (". Google Trends. Retrieved November 10, 2024.

- ↑ "Future of Life Institute". books.google.com. Retrieved November 10, 2024.