Difference between revisions of "Timeline of Center for Human-Compatible AI"


! Year !! Month and date !! Event type !! Details

|-

| 2016 || {{dts|August}} || Founding Vision || Stuart Russell, an AI researcher and co-author of the textbook ''Artificial Intelligence: A Modern Approach'', establishes CHAI at UC Berkeley. Russell argues that the field should shift toward developing AI systems that are "provably beneficial" and aligned with human values.<ref>{{cite web |url=https://news.berkeley.edu/2016/08/29/center-for-human-compatible-artificial-intelligence/ |title=UC Berkeley launches Center for Human-Compatible Artificial Intelligence |date=August 29, 2016 |publisher=Berkeley News |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|August}} || Founding Members || CHAI's founding team includes Stuart Russell, Andrew Critch (co-founder of the Center for Applied Rationality), and Anca Dragan (a researcher in human-robot interaction). Their combined expertise lays the groundwork for CHAI's interdisciplinary research on AI alignment and long-term safety.<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=March 2018 Newsletter - Machine Intelligence Research Institute |publisher=Machine Intelligence Research Institute |date=March 25, 2018 |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|August}} || Website || CHAI launches its official website as a hub for resources, updates, and publications on AI safety and alignment. The site lists research priorities, key collaborators, and recommended reading for the AI alignment community.<ref>{{cite web |url=http://humancompatible.ai |title=Center for Human-Compatible AI Official Website |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|September}} || Financial || CHAI receives a $5.6 million grant from the Open Philanthropy Project. The funding supports early research projects, staff recruitment, and operational infrastructure.<ref>{{cite web |url=https://donations.vipulnaik.com/donor.php?donor=Open+Philanthropy+Project&cause_area_filter=AI+risk |title=Open Philanthropy Project Awards Grant to CHAI |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|October}} || Collaboration || CHAI partners with the Machine Intelligence Research Institute (MIRI) and the Center for Applied Rationality (CFAR) on interdisciplinary research aimed at reducing existential risks and developing AI alignment methods.<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=March 2018 Newsletter - Machine Intelligence Research Institute |publisher=Machine Intelligence Research Institute |date=March 25, 2018 |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|November}} || Outreach || Stuart Russell delivers a widely viewed TED Talk on the risks of poorly specified AI objectives, promoting CHAI's mission of aligning AI with human values. The talk shows how misaligned objectives can lead to harmful behavior and argues for AI safety measures.<ref>{{cite web |url=https://www.ted.com/talks/stuart_russell_how_ai_might_make_us_better_humans |title=How AI Might Make Us Better Humans |publisher=TED |date=November 2016 |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|November 24}} || Publication || "The Off-Switch Game," by Dylan Hadfield-Menell, Anca Dragan, Pieter Abbeel, and Stuart Russell, is uploaded to arXiv. The paper analyzes a game in which a human can switch off a robot and shows that a robot that is uncertain about the human's objective has an incentive to preserve its off switch. It becomes an influential early contribution to AI safety research.<ref>{{cite web |url=https://arxiv.org/abs/1611.08219 |title=[1611.08219] The Off-Switch Game |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|December}} || Resources || CHAI publishes an annotated bibliography of recommended readings on AI safety and alignment. The document becomes a standard starting point for new researchers in the field.<ref>{{cite web |url=http://humancompatible.ai/bibliography |title=Center for Human-Compatible AI |accessdate=December 15, 2024}}</ref>

|-

| 2016 || {{dts|December}} || Operational Support || The Berkeley Existential Risk Initiative (BERI) begins providing operational support to CHAI, assisting with grant management, workshop logistics, and infrastructure so that researchers can focus on AI safety research.<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=March 2018 Newsletter - Machine Intelligence Research Institute |publisher=Machine Intelligence Research Institute |date=March 25, 2018 |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|March}} || Collaboration || The Berkeley Existential Risk Initiative (BERI) formalizes its partnership with CHAI, providing operational support for grant management, workshops, and recruitment. The collaboration allows CHAI to expand its research capacity and host interdisciplinary events on AI alignment and safety.<ref>{{cite web |url=https://existence.org/ |title=What We Do - Berkeley Existential Risk Initiative |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|May}} || Team Expansion || Rosie Campbell joins CHAI as Assistant Director, bringing experience in AI ethics and program management. She plays a key role in organizing CHAI's first annual workshop and in fostering collaborations between academic and industry partners.<ref>{{cite web |url=https://www.bbc.co.uk/rd/people/rosie-campbell?Type=Posts&Decade=All |title=Rosie Campbell - BBC R&D |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|May 5}}–6 || Workshop || CHAI hosts its inaugural annual workshop, bringing together researchers and practitioners to discuss challenges in AI alignment. The event focuses on reorienting AI development toward systems that are provably beneficial to humans and establishes CHAI as a hub for AI safety discussion.<ref>{{cite web |url=http://humancompatible.ai/workshop-2017/ |title=Center for Human-Compatible AI |accessdate=February 9, 2018 |archiveurl=https://archive.is/pXQ4q |archivedate=February 9, 2018 |dead-url=no}}</ref>

|-

| 2017 || {{dts|May 28}} || Publication || The paper "Should Robots be Obedient?" by Smitha Milli, Dylan Hadfield-Menell, Anca Dragan, and Stuart Russell is uploaded to arXiv. It examines the risks of robots that follow human orders literally and argues that, when humans give imperfect orders, there is a tradeoff between a robot's obedience and the value it can provide by inferring what the human actually wants.<ref>{{cite web |url=https://arxiv.org/abs/1705.09990 |title=[1705.09990] Should Robots be Obedient? |accessdate=December 15, 2024}}</ref><ref>{{cite web |url=https://effective-altruism.com/ea/1iu/2018_ai_safety_literature_review_and_charity/ |title=2018 AI Safety Literature Review and Charity Comparison |publisher=Effective Altruism Forum |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|June}} || Website || CHAI updates its official website with workshop proceedings, publications, and a dedicated section of recommended AI safety resources, making them more accessible to the research community.<ref>{{cite web |url=http://humancompatible.ai |title=Center for Human-Compatible AI Official Website |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|July}} || Funding || Open Philanthropy recommends a grant of $403,890 to BERI to support its collaboration with CHAI at UC Berkeley. The grant helps BERI hire contractors and part-time employees for tasks such as web development and event coordination.<ref>{{cite web |url=https://www.openphilanthropy.org/grants/berkeley-existential-risk-initiative-core-support-and-chai-collaboration/ |title=Open Philanthropy Grant for CHAI Collaboration |publisher=Open Philanthropy |accessdate=December 17, 2024}}</ref>

|-

| 2017 || {{dts|July}} || Publication || CHAI researchers release a preliminary report, "Reward Modeling for Scalable AI Alignment." The internal document outlines strategies for designing reward systems that accurately capture human intentions, laying groundwork for future research.<ref>{{cite web |url=http://humancompatible.ai/research |title=Research at CHAI |accessdate=December 15, 2024}}</ref>

|-

| 2017 || {{dts|October}} || Staff Transition || Rosie Campbell leaves BBC R&D to join CHAI as Assistant Director. Her work focuses on expanding CHAI's operations and strengthening collaborations with AI safety organizations worldwide.<ref>{{cite web |url=https://www.bbc.co.uk/rd/people/rosie-campbell?Type=Posts&Decade=All |title=Rosie Campbell - BBC R&D |accessdate=May 11, 2018 |archiveurl=https://archive.is/2o0RJ |archivedate=May 11, 2018 |dead-url=no |quote=Rosie left in October 2017 to take on the role of Assistant Director of the Center for Human-Compatible AI at UC Berkeley, a research group which aims to ensure that artificially intelligent systems are provably beneficial to humans.}}</ref>

|-

| 2018 || {{dts|February 1}} || Publication || Joseph Halpern publishes "Information Acquisition Under Resource Limitations in a Noisy Environment," contributing to research on AI decision-making under constraints. The paper examines how agents can make good decisions when data is limited and observations are noisy, with implications for robust AI system design.<ref>{{cite web|url=https://arxiv.org |title=Information Acquisition Under Resource Limitations in a Noisy Environment |date=February 1, 2018 |accessdate=September 30, 2024}}</ref>

|-

| 2018 || {{dts|February 7}} || Media Mention || Anca Dragan, a CHAI researcher, is featured in Forbes for her work on value alignment and ethical AI. The article highlights her research on ensuring that AI systems respect human preferences, broadening public understanding of AI's societal implications.<ref>{{cite web|url=https://www.forbes.com |title=Anca Dragan on AI Value Alignment |date=February 7, 2018 |accessdate=September 30, 2024}}</ref>

|-

| 2018 || {{dts|February 26}} || Conference || Anca Dragan presents "Expressing Robot Incapability" at the ACM/IEEE International Conference on Human-Robot Interaction. The work studies how robots can communicate their limitations to humans, supporting trust and transparency in human-robot collaboration.<ref>{{cite web|url=https://www.humanrobotinteraction.org/ |title=Expressing Robot Incapability |publisher=ACM/IEEE International Conference |date=February 26, 2018 |accessdate=September 30, 2024}}</ref>

|-

| 2018 || {{dts|March}} || Expansion || BERI broadens its support beyond CHAI to include organizations such as the Machine Intelligence Research Institute (MIRI). BERI nonetheless remains a key operational partner for CHAI, handling logistics so that CHAI researchers can focus on AI safety research.<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=March 2018 Newsletter - Machine Intelligence Research Institute |publisher=Machine Intelligence Research Institute |date=March 25, 2018 |accessdate=December 15, 2024}}</ref>

|-

| 2018 || {{dts|March}} || Research Leadership || Andrew Critch moves from MIRI to CHAI as its first research scientist, focusing on foundational alignment problems and helping define CHAI's long-term research direction.<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=March 2018 Newsletter - Machine Intelligence Research Institute |publisher=Machine Intelligence Research Institute |date=March 25, 2018 |accessdate=December 15, 2024}}</ref>

|-

| 2018 || {{dts|March 8}} || Publication || Anca Dragan and colleagues publish "Learning from Physical Human Corrections, One Feature at a Time." The paper examines how robots can learn from physical corrections that humans give during interaction, updating their objective one feature at a time.<ref>{{cite web|url=https://arxiv.org |title=Learning from Physical Human Corrections, One Feature at a Time |date=March 8, 2018 |accessdate=September 30, 2024}}</ref>

|-

| 2018 || {{dts|April 4}}–12 || Organization || CHAI updates its branding, introducing a new logo with white "CHAI" lettering on a green background. The rebranding is meant to reinforce CHAI's identity as an AI alignment research center.<ref>{{cite web |url=http://humancompatible.ai/ |title=Center for Human-Compatible AI |accessdate=May 10, 2018 |archiveurl=https://web.archive.org/web/20180404185432/http://humancompatible.ai/ |archivedate=April 4, 2018 |dead-url=yes}}</ref>

|-

| 2018 || {{dts|April 9}} || Publication || Rohin Shah, a CHAI affiliate, publicly launches the Alignment Newsletter. The weekly newsletter summarizes recent AI safety work and becomes a widely used resource for researchers and enthusiasts.<ref>{{cite web |url=https://www.lesswrong.com/posts/RvysgkLAHvsjTZECW/announcing-the-alignment-newsletter |title=Announcing the Alignment Newsletter |date=April 9, 2018 |first=Rohin |last=Shah |accessdate=May 10, 2018}}</ref>

|-

| 2018 || {{dts|April 28}}–29 || Workshop || CHAI's second annual workshop brings together researchers, industry experts, and policymakers to discuss advances in AI alignment. Topics include reward modeling, interpretability, and cooperative AI systems.<ref>{{cite web |url=http://humancompatible.ai/workshop-2018 |title=Center for Human-Compatible AI Workshop 2018 |accessdate=February 9, 2018 |archiveurl=https://archive.is/XcxCZ |archivedate=February 9, 2018}}</ref>

|-

| 2018 || {{dts|July 2}} || Publication || Thomas Krendl Gilbert presents "A Broader View on Bias in Automated Decision-Making" at ICML 2018. The work critiques bias in AI systems and offers strategies for promoting fairness and ethical standards in automated decisions.<ref>{{cite web|url=https://arxiv.org |title=A Broader View on Bias in Automated Decision-Making |publisher=ICML 2018 |date=July 2, 2018}}</ref>

|-

| 2018 || {{dts|July 13}} || Workshop || Daniel Filan presents "Exploring Hierarchy-Aware Inverse Reinforcement Learning" at the 1st Workshop on Goal Specifications for Reinforcement Learning. The work studies how inverse reinforcement learning can account for the hierarchical structure of human goals.<ref>{{cite web|url=https://goal-spec.rlworkshop.com/ |title=Exploring Hierarchy-Aware Inverse Reinforcement Learning |publisher=Goal Specifications Workshop |date=July 13, 2018}}</ref>

|-

| 2018 || {{dts|August 26}} || Workshop || CHAI students participate in MIRI's AI Alignment Workshop, which addresses key AI safety challenges and fosters collaboration among AI researchers and practitioners.<ref>{{cite web|url=https://intelligence.org |title=MIRI AI Alignment Workshop |publisher=Machine Intelligence Research Institute |date=August 26, 2018}}</ref>

|-

| 2018 || {{dts|September 4}} || Conference || Jaime Fisac presents research on robust AI interactions at three conferences, focusing on ensuring AI systems behave predictably in dynamic, uncertain environments.<ref>{{cite web|url=https://www.chai.berkeley.edu |title=Jaime Fisac AI Safety Research |publisher=CHAI |date=September 4, 2018}}</ref>

|-

| 2018 || {{dts|October 31}} || Recognition || Rosie Campbell is named one of the Top Women in AI Ethics on Social Media by Mia Dand. The recognition highlights her leadership in promoting ethical AI development.<ref>{{cite web|url=https://lighthouse3.com |title=Top Women in AI Ethics |publisher=Lighthouse3 |date=October 31, 2018}}</ref>

|-

| 2018 || {{dts|December}} || Conference || CHAI researchers present their work at NeurIPS 2018 and take part in discussions on AI policy, safety, and interpretability.<ref>{{cite web|url=https://neurips.cc |title=NeurIPS 2018 Conference |publisher=NeurIPS |date=December 2018}}</ref>

|-

| 2018 || {{dts|December}} || Podcast || Rohin Shah discusses inverse reinforcement learning on the Future of Life Institute's AI Alignment Podcast, helping explain technical AI alignment challenges to a broader audience.<ref>{{cite web|url=https://futureoflife.org |title=AI Alignment Podcast: Rohin Shah |publisher=Future of Life Institute |date=December 2018}}</ref>

|-

| 2018 || {{dts|December}} || Media Mention || Stuart Russell and Rosie Campbell appear in a Vox article on existential risks from AI, emphasizing the need for stringent AI safety measures to mitigate potential harm.<ref>{{cite web|url=https://www.vox.com |title=The Case for Taking AI Seriously as a Threat to Humanity |publisher=Vox |date=December 2018}}</ref>

|-

| 2019 || {{dts|January}} || Recognition || Stuart Russell receives the AAAI Feigenbaum Prize for his pioneering work in AI research and policy. His contributions to probabilistic reasoning and AI alignment further enhance CHAI's reputation as a leader in AI safety.<ref>{{cite web |url=https://humancompatible.ai/news/2019/01/15/russel_prize/ |title=Stuart Russell Receives AAAI Feigenbaum Prize |website=CHAI |date=January 15, 2019 |accessdate=December 15, 2024}}</ref>

|-

| 2019 || {{dts|January 8}} || Talks || Rosie Campbell delivers public talks at San Francisco and East Bay AI Meetups, discussing neural networks and CHAI's approach to AI safety. These talks foster broader community engagement with CHAI's research.<ref>{{cite web|url=https://humancompatible.ai/news/2019/01/08/Rosie_Demystify/ |title=Rosie Campbell Speaks About AI Safety and Neural Networks at San Francisco and East Bay AI Meetups |website=CHAI |date=January 8, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|January 17}} || Conference || CHAI faculty present several papers at AAAI 2019 on topics including deception in security games, the ethical implications of AI systems, and multi-agent reinforcement learning. The papers emphasize the ethical deployment of AI and its societal impact.<ref>{{cite web|url=https://humancompatible.ai/news/2019/01/17/CHAI_AAAI2019/ |title=CHAI Papers at the AAAI 2019 Conference |website=CHAI |date=January 17, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|January 20}} || Recognition || Alex Turner, a former CHAI intern, wins the AI Alignment Prize for his work on "penalizing impact via attainable utility preservation." The approach penalizes an agent for changing its ability to achieve a range of auxiliary goals, as a way to limit unintended side effects.<ref>{{cite web|url=https://humancompatible.ai/news/2019/01/20/turner_prize/ |title=Former CHAI Intern Wins AI Alignment Prize |website=CHAI |date=January 20, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|January 29}} || Conference || At ACM FAT* 2019, Smitha Milli and Anca Dragan present research on the ethical implications of AI transparency and fairness, highlighting the importance of accountability in automated decision-making systems.<ref>{{cite web|url=https://humancompatible.ai/news/2019/01/29/CHAI_FAT2019/ |title=CHAI Papers at FAT* 2019 |website=CHAI |date=January 29, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|June 15}} || Conference || At ICML 2019, CHAI researchers including Rohin Shah, Pieter Abbeel, and Anca Dragan present research on human-AI coordination and on accounting for human biases in reward inference, with the aim of more scalable approaches to alignment.<ref>{{cite web|url=https://humancompatible.ai/news/2019/06/15/talks_icml_2019/ |title=CHAI Presentations at ICML |website=CHAI |date=June 15, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|July 5}} || Publication || CHAI releases an open-source imitation learning library developed by Steven Wang, Adam Gleave, and Sam Toyer. The library provides implementations and benchmarks of algorithms such as GAIL and AIRL, supporting research on learning from demonstrations.<ref>{{cite web|url=https://humancompatible.ai/news/2019/07/05/imitation_learning_library/ |title=CHAI Releases Imitation Learning Library |website=CHAI |date=July 5, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|July 5}} || Research Summary || Rohin Shah publishes a summary of CHAI's research on learning human biases for reward inference, explaining how AI systems can better infer human preferences from imperfect human behavior.<ref>{{cite web|url=https://humancompatible.ai/news/2019/07/05/rohin_blogposts/ |title=Rohin Shah Summarizes CHAI's Research on Learning Human Biases |website=CHAI |date=July 5, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|August 15}} || Media Publication || Mark Nitzberg writes an article in WIRED advocating an "FDA for algorithms," calling for stricter regulatory oversight of AI development to improve safety and transparency.<ref>{{cite web|url=https://www.wired.com/story/fda-for-algorithms/ |title=Mark Nitzberg Writes in WIRED on the Need for an FDA for Algorithms |website=WIRED |date=August 15, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|August 28}} || Paper Submission || Thomas Krendl Gilbert submits "The Passions and the Reward Functions: Rival Views of AI Safety?" to FAT* 2020. The paper explores philosophical perspectives on aligning AI reward systems with human emotions.<ref>{{cite web|url=https://humancompatible.ai/news/2019/08/28/gilbert_paper/ |title=Thomas Krendl Gilbert Submits Paper on Philosophical AI Safety |website=CHAI |date=August 28, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|September 28}} || Newsletter || Rohin Shah expands the Alignment Newsletter, which becomes a widely used source of summaries of recent AI safety research for researchers in the field.<ref>{{cite web|url=https://humancompatible.ai/news/2019/09/28/newsletter_update/ |title=Rohin Shah Expands the AI Alignment Newsletter |website=CHAI |date=September 28, 2019 |accessdate=October 7, 2024}}</ref>

|-

| 2019 || {{dts|November}} || Funding || Open Philanthropy recommends a two-year grant of $705,000 to the Berkeley Existential Risk Initiative (BERI) to support its continued collaboration with the Center for Human-Compatible AI (CHAI). The funding provides resources for machine learning researchers at CHAI and for work on long-term risks from advanced AI systems. By easing operational constraints, the grant lets CHAI concentrate on technical research into AI alignment and "provably beneficial" AI systems.<ref>{{cite web |url=https://www.goodventures.org/our-portfolio/grants/berkeley-existential-risk-initiative-chai-collaboration-2019/ |title=Open Philanthropy Grant for BERI-CHAI Collaboration |publisher=Good Ventures |date=November 2019 |accessdate=December 17, 2024}}</ref>

|-

| 2020 || {{dts|May 30}} (submission), June 11 (date in paper) || Publication || The paper "AI Research Considerations for Human Existential Safety (ARCHES)" by Andrew Critch of the Center for Human-Compatible AI (CHAI) and David Krueger of the Montreal Institute for Learning Algorithms (MILA) is uploaded to arXiv.<ref>{{cite web|url = https://arxiv.org/abs/2006.04948|title = AI Research Considerations for Human Existential Safety (ARCHES)|date = May 30, 2020|accessdate = June 11, 2020}}</ref> MIRI's July 2020 newsletter calls it "a review of 29 AI (existential) safety research directions, each with an illustrative analogy, examples of current work and potential synergies between research directions, and discussion of ways the research approach might lower (or raise) existential risk."<ref>{{cite web|url = https://intelligence.org/2020/07/08/july-2020-newsletter/|title = July 2020 Newsletter|last = Bensinger|first = Rob|date = July 8, 2020|publisher = Machine Intelligence Research Institute}}</ref> Critch is interviewed about the paper on the AI Alignment Podcast episode released September 15.<ref>{{cite web|url = https://futureoflife.org/2020/09/15/andrew-critch-on-ai-research-considerations-for-human-existential-safety/|title = Andrew Critch on AI Research Considerations for Human Existential Safety|date = September 15, 2020|accessdate = December 20, 2020|publisher = Future of Life Institute}}</ref>

|-

| 2020 || {{dts|June 1}} || Workshop || CHAI holds its first virtual workshop in response to the COVID-19 pandemic. The event brings together 150 participants from the AI safety community for discussions on reducing existential risks from advanced AI, building collaborations, and advancing research initiatives.<ref>{{cite web|url=https://humancompatible.ai/news/2020/06/01/first-virtual-workshop/ |title=CHAI Holds Its First Virtual Workshop |website=CHAI |date=June 1, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|September 1}} || Staff || CHAI welcomes six new PhD students: Yuxi Liu, Micah Carroll, Cassidy Laidlaw, Alex Gunning, Alyssa Dayan, and Jessy Lin. The students, advised by CHAI's principal investigators, bring expertise in areas such as mathematics, human-AI cooperation, and safety mechanisms.<ref>{{cite web|url=https://humancompatible.ai/news/2020/09/01/six-new-phd-students-join-chai/ |title=Six New PhD Students Join CHAI |website=CHAI |date=September 1, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|September 10}} || Publication || CHAI PhD student Rachel Freedman publishes two papers at IJCAI-20 workshops. "Choice Set Misspecification in Reward Inference" investigates how a robot's reward inference goes wrong when it misspecifies the set of choices available to the human, while "Aligning with Heterogeneous Preferences for Kidney Exchange" explores preference aggregation for kidney exchange programs, illustrating practical applications of CHAI's research.<ref>{{cite web|url=https://humancompatible.ai/news/2020/09/10/rachel-freedman-papers-ijcai/ |title=IJCAI-20 Accepts Two Papers by CHAI PhD Student Rachel Freedman |website=CHAI |date=September 10, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|October 10}} || Publication || Brian Christian publishes ''The Alignment Problem: Machine Learning and Human Values'', a book-length examination of AI safety challenges and progress. The book highlights CHAI's contributions to the field, covering both technical progress and ethical considerations.<ref>{{cite web|url=https://humancompatible.ai/news/2020/10/10/alignment-problem-published/ |title=Brian Christian Publishes The Alignment Problem |website=CHAI |date=October 10, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|October 21}} || Event || CHAI hosts a virtual launch event for Brian Christian's book ''The Alignment Problem''. The event includes an interview with the author, moderated by journalist Nora Young, and an audience Q&A session on AI safety and ethical frameworks.<ref>{{cite web|url=https://humancompatible.ai/news/2020/11/05/book-launch-alignment-problem/ |title=Watch the Book Launch of The Alignment Problem in Conversation with Brian Christian and Nora Young |website=CHAI |date=November 5, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|November 12}} || Internship || CHAI opens applications for its 2021 research internship program, which offers mentorship in AI safety research. Interns take part in seminars, workshops, and hands-on projects. The early application deadline is November 23 and the final deadline is December 13.<ref>{{cite web|url=https://humancompatible.ai/news/2020/11/12/internship-applications/ |title=CHAI Internship Application Is Now Open |website=CHAI |date=November 12, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2020 || {{dts|December}} || Funding || The Survival and Flourishing Fund (SFF) awards $247,000 to BERI to support its collaboration with the Center for Human-Compatible AI (CHAI). The funding lets BERI provide operational and logistical support for CHAI's AI alignment research, streamlining administrative processes so that CHAI researchers can focus on technical AI safety problems.<ref>{{cite web |url=https://humancompatible.ai/news/2020/12/20/chai-beri-donations/ |title=SFF Donation to BERI for CHAI Collaboration |publisher=CHAI |date=December 20, 2020 |accessdate=December 17, 2024}}</ref>

|-

| 2020 || {{dts|December 20}} || Financial || The Survival and Flourishing Fund (SFF) donates $799,000 to CHAI and $247,000 to BERI to support their collaborative AI safety research. The funding supports work on mitigating existential risks and improving humanity's long-term survival prospects.<ref>{{cite web|url=https://humancompatible.ai/news/2020/12/20/chai-beri-donations/ |title=CHAI and BERI Receive Donations |website=CHAI |date=December 20, 2020 |accessdate=October 7, 2024}}</ref>

|-

| 2021 || {{dts|January 25}} || Podcast || Michael Dennis appears on the TalkRL podcast to discuss reinforcement learning, AI safety, and the challenges of building safe and reliable AI systems, including the difficulty of designing rewards and modeling behavior so that AI systems pursue human objectives.<ref>{{cite web|url=https://www.talkrl.com/episodes/michael-dennis |title=Michael Dennis |website=TalkRL Podcast |date=January 25, 2021 |accessdate=October 7, 2024}}</ref>

|-

| 2021 || {{dts|February 5}} || Publication || Thomas Krendl Gilbert publishes the paper "AI Development for the Public Interest: From Abstraction Traps to Sociotechnical Risks" at IEEE ISTAS20. The paper critiques the abstraction traps that arise when AI systems are designed in isolation from their social setting, and advocates for integrating social context and ethical considerations into AI system development.<ref>{{cite web|url=https://humancompatible.ai/news/2021/02/05/thomas-gilbert-published-in-ieee-istas20/ |title=Tom Gilbert Published in IEEE ISTAS20 |website=Center for Human-Compatible AI |date=February 5, 2021 |accessdate=October 7, 2024}}</ref> | | 2021 || {{dts|February 5}} || Publication || Thomas Krendl Gilbert publishes the paper "AI Development for the Public Interest: From Abstraction Traps to Sociotechnical Risks" at IEEE ISTAS20. The paper critiques the abstraction traps that arise when AI systems are designed in isolation from their social setting, and advocates for integrating social context and ethical considerations into AI system development.<ref>{{cite web|url=https://humancompatible.ai/news/2021/02/05/thomas-gilbert-published-in-ieee-istas20/ |title=Tom Gilbert Published in IEEE ISTAS20 |website=Center for Human-Compatible AI |date=February 5, 2021 |accessdate=October 7, 2024}}</ref> ||

| Line 191: | Line 160: | ||

| 2021 || {{dts|February 9}} || Debate || Stuart Russell debates Melanie Mitchell on The Munk Debates. Russell discusses AI safety concerns, the urgency of AI alignment research, and the risks associated with unregulated AI development. He stresses the importance of international governance to control AI technologies safely.<ref>{{cite web|url=https://humancompatible.ai/news/2021/02/09/stuart-russell-on-the-munk-debates/ |title=Stuart Russell on The Munk Debates |website=Center for Human-Compatible AI |date=February 9, 2021 |accessdate=October 7, 2024}}</ref> | | 2021 || {{dts|February 9}} || Debate || Stuart Russell debates Melanie Mitchell on The Munk Debates. Russell discusses AI safety concerns, the urgency of AI alignment research, and the risks associated with unregulated AI development. He stresses the importance of international governance to control AI technologies safely.<ref>{{cite web|url=https://humancompatible.ai/news/2021/02/09/stuart-russell-on-the-munk-debates/ |title=Stuart Russell on The Munk Debates |website=Center for Human-Compatible AI |date=February 9, 2021 |accessdate=October 7, 2024}}</ref> | ||

| − | |||

| − | |||

| − | |||

|- | |- | ||

| Line 208: | Line 174: | ||

|- | |- | ||

| − | |||

| 2021 || {{dts|June 7}}-8 || Workshop || CHAI hosts its Fifth Annual Workshop, where researchers, students, and collaborators discuss advancements in AI safety, alignment, and research progress. The workshop addresses key challenges in AI reward modeling, interpretability, and scalable alignment techniques.<ref>{{cite web|url=https://humancompatible.ai/news/2021/06/16/fifth-annual-chai-workshop/ |title=Fifth Annual CHAI Workshop |website=Center for Human-Compatible AI |date=June 16, 2021 |accessdate=October 7, 2024}}</ref> | | 2021 || {{dts|June 7}}-8 || Workshop || CHAI hosts its Fifth Annual Workshop, where researchers, students, and collaborators discuss advancements in AI safety, alignment, and research progress. The workshop addresses key challenges in AI reward modeling, interpretability, and scalable alignment techniques.<ref>{{cite web|url=https://humancompatible.ai/news/2021/06/16/fifth-annual-chai-workshop/ |title=Fifth Annual CHAI Workshop |website=Center for Human-Compatible AI |date=June 16, 2021 |accessdate=October 7, 2024}}</ref> | ||

| Line 227: | Line 192: | ||

| 2022 || {{dts|January 18}} || Publication || CHAI researchers publish several papers. Tom Lenaerts and his co-authors explore "Voluntary safety commitments in AI development," suggesting that such commitments can help AI development escape over-regulation. Another paper, "Cross-Domain Imitation Learning via Optimal Transport," by Arnaud Fickinger, Stuart Russell, and others, discusses how to achieve cross-domain transfer in continuous control domains. Finally, Scott Emmons and his team publish research on offline reinforcement learning, showing that simple design choices can improve empirical performance on RL benchmarks.<ref>{{cite web|url=https://humancompatible.ai/news/2022/01/18/new-papers-published/ |title=New Papers Published |website=Center for Human-Compatible AI |date=January 18, 2022 |accessdate=October 7, 2024}}</ref> | | 2022 || {{dts|January 18}} || Publication || CHAI researchers publish several papers. Tom Lenaerts and his co-authors explore "Voluntary safety commitments in AI development," suggesting that such commitments can help AI development escape over-regulation. Another paper, "Cross-Domain Imitation Learning via Optimal Transport," by Arnaud Fickinger, Stuart Russell, and others, discusses how to achieve cross-domain transfer in continuous control domains. Finally, Scott Emmons and his team publish research on offline reinforcement learning, showing that simple design choices can improve empirical performance on RL benchmarks.<ref>{{cite web|url=https://humancompatible.ai/news/2022/01/18/new-papers-published/ |title=New Papers Published |website=Center for Human-Compatible AI |date=January 18, 2022 |accessdate=October 7, 2024}}</ref> ||

| + | |- | ||

| + | | 2022 || {{dts|February}} || Funding || Open Philanthropy awards a $1,126,160 grant to the Berkeley Existential Risk Initiative (BERI) to enhance its collaboration with the Center for Human-Compatible AI (CHAI). The funding supports the creation of an in-house compute cluster at CHAI, a critical resource for accelerating large-scale machine learning experiments in AI alignment research. Additionally, the grant enables CHAI to hire a part-time system administrator, ensuring the smooth operation and maintenance of the new compute infrastructure. This investment reflects Open Philanthropy’s strategy of providing targeted resources for technical AI safety research, in this case by addressing computational bottlenecks that can slow research aimed at reducing existential risk. | ||

| + | <ref>{{cite web |url=https://www.openphilanthropy.org/grants/berkeley-existential-risk-initiative-chai-collaboration-2022/ |title=Open Philanthropy Grant for CHAI Compute Cluster |publisher=Open Philanthropy |date=February 2022 |accessdate=December 17, 2024}}</ref> | ||

|- | |- | ||

| Line 296: | Line 264: | ||

| 2024 || {{dts|August 7}} || Publication || Rachel Freedman and Wes Holliday publish a paper at the International Conference on Machine Learning (ICML) discussing how social choice theory can guide AI alignment when dealing with diverse human feedback. The paper explores approaches like reinforcement learning from human feedback and constitutional AI to better aggregate human preferences.<ref>{{cite web|url=https://humancompatible.ai/news/2024/08/07/social-choice-should-guide-ai-alignment-in-dealing-with-diverse-human-feedback/ |title=Social Choice Should Guide AI Alignment in Dealing with Diverse Human Feedback |website=CHAI |date=August 7, 2024 |accessdate=October 7, 2024}}</ref> | | 2024 || {{dts|August 7}} || Publication || Rachel Freedman and Wes Holliday publish a paper at the International Conference on Machine Learning (ICML) discussing how social choice theory can guide AI alignment when dealing with diverse human feedback. The paper explores approaches like reinforcement learning from human feedback and constitutional AI to better aggregate human preferences.<ref>{{cite web|url=https://humancompatible.ai/news/2024/08/07/social-choice-should-guide-ai-alignment-in-dealing-with-diverse-human-feedback/ |title=Social Choice Should Guide AI Alignment in Dealing with Diverse Human Feedback |website=CHAI |date=August 7, 2024 |accessdate=October 7, 2024}}</ref> | ||

| + | |} | ||

| + | |||

| + | == Research Directions == | ||

| + | |||

| + | {| class="wikitable" | ||

| + | ! Research Trajectory !! Current Directions !! Related Timeline Events | ||

| + | |- | ||

| + | | AI Alignment | ||

| + | | Developing frameworks such as assistance games, reward modeling, and dynamic reward MDPs to align AI with human intentions and preferences, building on methods such as inverse reinforcement learning. | ||

| + | | Publications: Off-Switch Game<ref>{{cite web |url=https://arxiv.org/abs/1611.08219 |title=The Off-Switch Game |date=November 24, 2016}}</ref>, ARCHES<ref>{{cite web |url=https://doi.org/10.1016/j.artint.2021.103526 |title=AI Research Considerations for Human Existential Safety (ARCHES) |date=May 30, 2020}}</ref>, Dynamic Reward MDPs<ref>{{cite web |url=https://humancompatible.ai/news/2024/07/23/ai-alignment-with-changing-and-influenceable-reward-functions |title=AI Alignment with Changing and Influenceable Reward Functions |date=July 23, 2024}}</ref> | ||

| + | Workshops: Annual CHAI Workshops<ref>{{cite web |url=https://humancompatible.ai/news/2024/06/18/8th-annual-chai-workshop |title=8th Annual CHAI Workshop |date=June 18, 2024}}</ref>, PSBAI Workshop<ref>{{cite web |url=https://humancompatible.ai/progress-report |title=Progress Report |date=May 2023}}</ref> | ||

| + | Conferences: ICML 2019<ref>{{cite web |url=https://humancompatible.ai/news/2019/06/15/talks_icml_2019/ |title=CHAI Presentations at ICML |date=June 15, 2019}}</ref>, NeurIPS 2018<ref>{{cite web |url=https://neurips.cc |title=NeurIPS 2018 Conference |date=December 2018}}</ref> | ||

| + | |- | ||

| + | | Human Feedback and Cooperation | ||

| + | | Improving reinforcement learning from human feedback (RLHF) with methods such as active teacher selection and visually assisted cluster ranking, which aim to make reward learning more efficient. | ||

| + | | Publications: Learning from Human Corrections<ref>{{cite web |url=https://arxiv.org |title=Learning from Physical Human Corrections, One Feature at a Time |date=March 8, 2018}}</ref>, RLHF with ATS<ref>{{cite web |url=https://humancompatible.ai/news/2024/04/30/reinforcement-learning-with-human-feedback-and-active-teacher-selection-rlhf-and-ats/ |title=Reinforcement Learning with Human Feedback and Active Teacher Selection |date=April 30, 2024}}</ref>, Cluster Ranking<ref>{{cite web |url=https://humancompatible.ai/news/2022/11/18/time-efficient-reward-learning-via-visually-assisted-cluster-ranking/ |title=Time-Efficient Reward Learning via Visually Assisted Cluster Ranking |date=November 18, 2022}}</ref> | ||

| + | Workshops: HILL Workshop<ref>{{cite web |url=https://humancompatible.ai/news/2022/11/18/time-efficient-reward-learning-via-visually-assisted-cluster-ranking/ |title=HILL Workshop |date=2022}}</ref>, Annual CHAI Workshops<ref>{{cite web |url=https://humancompatible.ai/news/2024/06/18/8th-annual-chai-workshop |title=Annual CHAI Workshops |date=June 18, 2024}}</ref> | ||

| + | Competitions: NeurIPS BASALT (2021)<ref>{{cite web |url=https://humancompatible.ai/news/2021/07/09/neurips-minerl-basalt-competition-launches/ |title=NeurIPS MineRL BASALT Competition |date=July 9, 2021}}</ref> | ||

| + | |- | ||

| + | | AI Policy and Governance | ||

| + | | Promoting AI regulation frameworks, such as an FDA for algorithms, and advocating for safe global AI deployment through public talks and media. | ||

| + | | Talks: Stuart Russell TED Talk<ref>{{cite web |url=https://www.ted.com/talks/stuart_russell_how_ai_might_make_us_better_humans |title=How AI Might Make Us Better Humans |date=November 2016}}</ref>, Munk Debates<ref>{{cite web |url=https://humancompatible.ai/news/2021/02/09/stuart-russell-on-the-munk-debates/ |title=Stuart Russell on The Munk Debates |date=February 9, 2021}}</ref> | ||

| + | Media: WIRED (FDA for algorithms)<ref>{{cite web |url=https://www.wired.com/story/fda-for-algorithms/ |title=FDA for Algorithms |date=August 15, 2019}}</ref>, Vox articles<ref>{{cite web |url=https://www.vox.com |title=The Case for Taking AI Seriously as a Threat to Humanity |date=December 2018}}</ref> | ||

| + | Recognition: TIME 100 Most Influential (2023)<ref>{{cite web |url=https://humancompatible.ai/news/2023/09/18/100-most-influential-people-in-ai/ |title=TIME 100 Most Influential People in AI |date=September 18, 2023}}</ref> | ||

| + | |- | ||

| + | | Ethics and Societal Impact | ||

| + | | Researching fairness, bias, and ethical risks in AI systems, including competence-aware systems and balancing AI obedience with safety. | ||

| + | | Publications: Should Robots Be Obedient?<ref>{{cite web |url=https://arxiv.org/abs/1705.09990 |title=Should Robots Be Obedient? |date=May 28, 2017}}</ref>, Bias in AI Systems<ref>{{cite web |url=https://arxiv.org |title=A Broader View on Bias in Automated Decision-Making |date=July 2, 2018}}</ref>, Competence-Aware Systems<ref>{{cite web |url=https://humancompatible.ai/news/2022/12/12/competence-aware-systems/ |title=Competence-Aware Systems |date=December 12, 2022}}</ref> | ||

| + | Conferences: FAT* 2019<ref>{{cite web |url=https://humancompatible.ai/news/2019/01/29/CHAI_FAT2019/ |title=FAT* 2019 |date=January 29, 2019}}</ref>, AAAI 2021<ref>{{cite web |url=https://humancompatible.ai/news/2021/03/18/chai-faculty-and-affiliates-publish-at-aaai-2021/ |title=AAAI 2021 |date=March 18, 2021}}</ref> | ||

| + | Recognition: Rosie Campbell Top Women in AI Ethics<ref>{{cite web |url=https://lighthouse3.com |title=Top Women in AI Ethics |date=October 31, 2018}}</ref> | ||

| + | |- | ||

| + | | Operational Support and Collaboration | ||

| + | | Expanding capacity through partnerships and funding to scale research and provide compute infrastructure. | ||

| + | | Financial: Open Philanthropy Grants<ref>{{cite web |url=https://www.openphilanthropy.org/grants/berkeley-existential-risk-initiative-chai-collaboration-2022/ |title=Open Philanthropy Grant |date=February 2022}}</ref>, SFF Donations<ref>{{cite web |url=https://humancompatible.ai/news/2020/12/20/chai-beri-donations/ |title=SFF Donations |date=December 20, 2020}}</ref> | ||

| + | Collaborations: BERI<ref>{{cite web |url=https://existence.org/ |title=BERI Partnership |date=2017}}</ref>, MIRI<ref>{{cite web |url=https://intelligence.org/2018/03/25/march-2018-newsletter/ |title=MIRI Newsletter |date=March 2018}}</ref> | ||

| + | Internal Reviews: CHAI Progress Reports (2022)<ref>{{cite web |url=https://humancompatible.ai/progress-report |title=Progress Report |date=May 2023}}</ref> | ||

|} | |} | ||

| Line 302: | Line 306: | ||

=== Google Scholar === | === Google Scholar === | ||

| − | The following table summarizes per-year mentions on Google Scholar as of | + | The following table summarizes per-year mentions on Google Scholar as of November 27th, 2024. |

{| class="sortable wikitable" | {| class="sortable wikitable" | ||

| Line 327: | Line 331: | ||

[[File:Center for Human-Compatible AI gscho.png|thumb|center|700px]] | [[File:Center for Human-Compatible AI gscho.png|thumb|center|700px]] | ||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

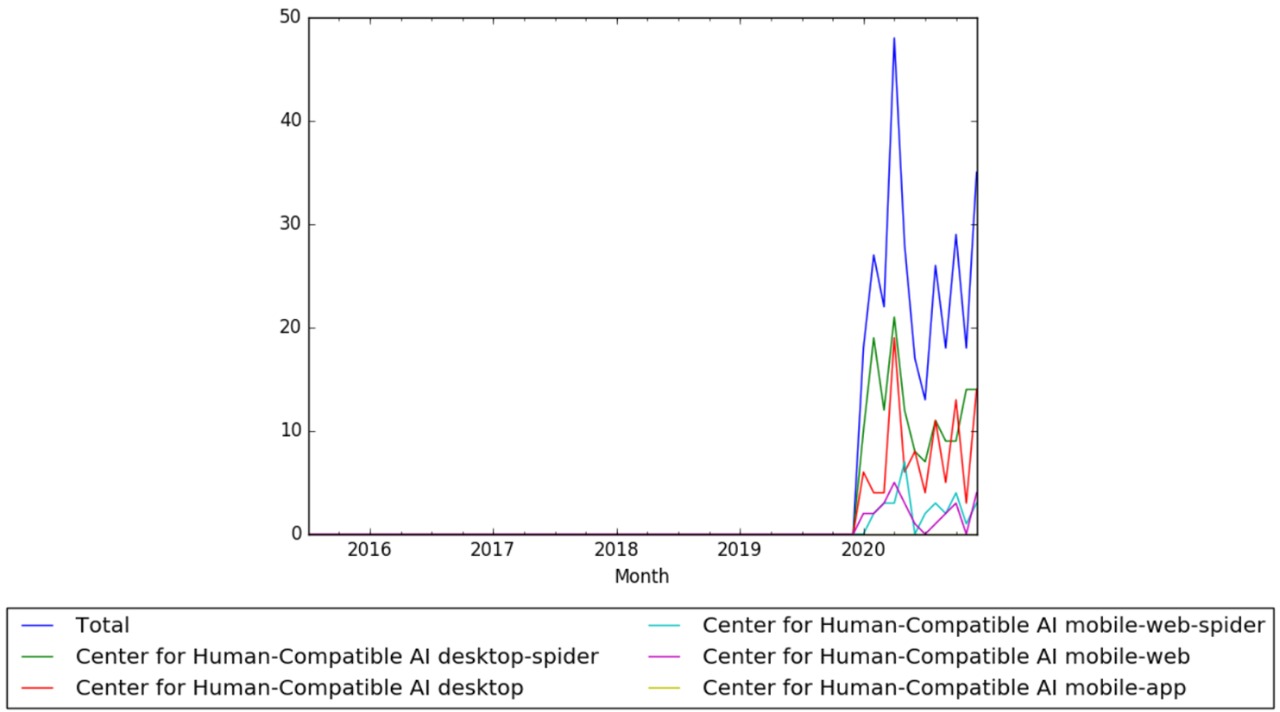

=== Wikipedia Views === | === Wikipedia Views === | ||

Latest revision as of 10:20, 17 December 2024

This is a timeline of Center for Human-Compatible AI (CHAI).


Big picture

| Time period | Development summary | More details |

|---|---|---|

| 2016–2021 | CHAI is established and grows | CHAI is established in 2016, begins producing research, and rapidly becomes a leading institution in AI safety. During this period, CHAI hosts numerous workshops, publishes key papers on AI alignment, and gains recognition within the AI safety community. Several PhD students join, and notable collaborations are formed with other institutions. |

| 2021–2024 | Continued expansion and recognition | From 2021 onwards, CHAI continues to expand its research efforts and impact. This period includes increased international recognition, major contributions to AI policy discussions, participation in global conferences, and the establishment of new research initiatives focused on AI-human collaboration and safety. CHAI's researchers receive prestigious awards, and the institution solidifies its role as a thought leader in AI alignment. |

Full timeline

Here are the inclusion criteria for various event types in the timeline related to CHAI (Center for Human-Compatible AI):

- Publication: The intention is to include the most notable publications. These are typically those that have been highlighted by CHAI itself or have gained recognition in the broader AI safety community or media. Given the large volume of research papers, only those with significant impact, such as acceptance at a prominent conference or wide discussion, are included.

- Website: The intention is to include all websites directly associated with CHAI or initiatives that CHAI is heavily involved in. This includes new websites launched by CHAI or collaborative websites for joint projects.

- Staff: The intention is to include significant changes or additions to CHAI staff, particularly new PhD students, research fellows, senior scientists, and leadership positions. Promotions and transitions in key roles are also included.

- Workshop: All workshops organized or hosted by CHAI are included, particularly those that focus on advancing AI safety, alignment research, or collaborations within the AI research community. Virtual and in-person events count equally if organized by CHAI.

- Conference: All conferences that CHAI organizes or leads are included. Conferences where CHAI staff give significant presentations, lead discussions, or organize major sessions are also featured.

- Internal Review: This includes annual or progress reports published by CHAI, summarizing achievements, research outputs, and strategic directions. These reports provide a comprehensive review of the organization's work over a given period.

- External Review: Includes substantive reviews of CHAI's work by external bodies or media. Only reviews that treat CHAI or its researchers in significant detail are included.

- Financial: This category covers donations or funding announcements of over $10,000. Funding that supports major initiatives or collaborations that advance AI safety research is highlighted.

- Staff Recognition: This includes awards, honors, and recognitions received by CHAI researchers for their contributions to AI safety or AI ethics. Recognitions such as TIME’s 100 Most Influential People or prestigious fellowships are included.

- Social Media: Significant milestones such as new social media account creations for CHAI or major social media events (like Reddit AMAs) hosted by CHAI-affiliated researchers are included.

- Project Announcement or Initiatives: Major projects, initiatives, or research programs launched by CHAI that are aimed at advancing AI alignment, existential risk mitigation, or improving AI-human cooperation are included. These announcements highlight new directions or collaborative efforts in AI safety.

- Collaboration: Includes significant collaborations with other institutions where CHAI plays a major role, such as co-authoring reports, leading joint research projects, or providing advisory roles. Collaborations aimed at policy, safety, or alignment are particularly relevant.

| Year | Month and date | Event type | Details |

|---|---|---|---|

| 2016 | August | Founding Vision | Stuart Russell, renowned AI researcher and co-author of Artificial Intelligence: A Modern Approach, establishes CHAI at UC Berkeley. Russell emphasizes the need to shift the field toward developing AI systems that are "provably beneficial" and aligned with human values.[1] |

| 2016 | August | Founding Members | CHAI's founding team includes Stuart Russell, Andrew Critch (co-founder of CFAR), and Anca Dragan (expert in human-robot interaction). Their expertise lays the groundwork for interdisciplinary research in AI alignment and long-term safety.[2] |

| 2016 | August | Website | CHAI launches its official website to serve as a hub for resources, updates, and publications related to AI safety and alignment. The site includes research priorities, key collaborators, and recommended reading for the AI alignment community.[3] |

| 2016 | September | Financial | CHAI secures a $5.6 million grant from the Open Philanthropy Project. This significant funding enables early research projects, the recruitment of staff, and operational infrastructure.[4] |

| 2016 | October | Collaboration | CHAI partners with the Machine Intelligence Research Institute (MIRI) and the Center for Applied Rationality (CFAR). These collaborations focus on interdisciplinary research to address existential risks and foster AI alignment methodologies.[5] |

| 2016 | November | Outreach | Stuart Russell delivers a widely viewed TED Talk on the risks of poorly designed AI objectives, promoting CHAI’s mission to align AI with human values. The talk highlights potential misalignment issues and the necessity for AI safety measures.[6] |

| 2016 | November 24 | Publication | "The Off-Switch Game," co-authored by Dylan Hadfield-Menell, Anca Dragan, Pieter Abbeel, and Stuart Russell, is uploaded to arXiv. This influential paper introduces strategies for designing AI systems that allow safe human intervention, marking a pivotal contribution to AI safety.[7] |

| 2016 | December | Resources | CHAI publishes its annotated bibliography of recommended readings, offering curated resources on AI safety and alignment. This document becomes a cornerstone for guiding new researchers in the field.[8] |

| 2016 | December | Operational Support | The Berkeley Existential Risk Initiative (BERI) begins providing operational support to CHAI. BERI assists with grant management, workshop logistics, and infrastructure development, enabling researchers to focus on advancing AI safety.[9] |

| 2017 | March | Collaboration | The Berkeley Existential Risk Initiative (BERI) formalizes its partnership with CHAI, providing operational support for grant management, workshops, and recruitment. This collaboration enables CHAI to expand its research capacity and host interdisciplinary events focused on AI alignment and safety.[10] |

| 2017 | May | Team Expansion | Rosie Campbell joins CHAI as Assistant Director, leveraging her expertise in AI ethics and program management. She plays a critical role in organizing CHAI’s first annual workshop and fostering collaborations between academic and industry partners.[12] |

| 2017 | May 5–6 | Workshop | CHAI hosts its inaugural annual workshop, bringing together researchers and practitioners to discuss challenges in AI alignment. The event focuses on reorienting AI development toward systems that are provably beneficial to humans, establishing CHAI as a hub for AI safety discourse.[13] |

| 2017 | May 28 | Publication | The paper "Should Robots be Obedient?" by Smitha Milli, Dylan Hadfield-Menell, Anca Dragan, and Stuart Russell is uploaded to arXiv. The research explores the risks of rigid obedience in AI systems and proposes strategies for balancing compliance with ethical considerations, contributing significantly to the AI alignment discourse.[14][15] |

| 2017 | June | Website | CHAI updates its official website to include workshop proceedings, publications, and a dedicated section for recommended AI safety resources, enhancing accessibility for the research community.[16] |

| 2017 | July | Funding | Open Philanthropy recommends a grant of $403,890 to BERI to support its collaboration with CHAI at UC Berkeley, helping hire contractors and part-time employees for tasks such as web development and event coordination. |

| 2017 | July | Publication | CHAI researchers release a preliminary report on "Reward Modeling for Scalable AI Alignment." This internal document outlines strategies for designing reward systems that accurately capture human intentions, laying groundwork for future research.[17] |

| 2017 | October | Staff Transition | Rosie Campbell transitions from BBC R&D to join CHAI as Assistant Director. Her work focuses on expanding CHAI’s operations and enhancing collaborations with global AI safety organizations.[18] |

| 2018 | February 1 | Publication | Joseph Halpern publishes Information Acquisition Under Resource Limitations in a Noisy Environment, contributing to AI decision-making research under constraints. The paper explores how agents can optimize their decision processes with limited data and noisy environments, providing insights relevant to robust AI system design.[19] |

| 2018 | February 7 | Media Mention | Anca Dragan, a CHAI researcher, is featured in Forbes for her pioneering work on value alignment and ethical AI. The article highlights her contributions to ensuring AI systems respect human preferences, advancing public understanding of AI's societal implications.[20] |

| 2018 | February 26 | Conference | Anca Dragan presents Expressing Robot Incapability at the ACM/IEEE International Conference on Human-Robot Interaction. The presentation focuses on robots effectively communicating their limitations to humans, advancing trust and transparency in human-robot collaboration.[21] |

| 2018 | March | Expansion | BERI broadens its support beyond CHAI to include organizations like the Machine Intelligence Research Institute (MIRI). Despite this expansion, BERI remains a key operational partner for CHAI, ensuring smooth logistics and enabling focused AI safety research.[22] |

| 2018 | March | Research Leadership | Andrew Critch transitions from MIRI to CHAI as its first research scientist. Critch focuses on foundational alignment problems and helps define CHAI's long-term research direction.[23] |

| 2018 | March 8 | Publication | Anca Dragan and colleagues publish Learning from Physical Human Corrections, One Feature at a Time. The paper examines how robots can learn collaboratively from humans, improving performance through physical interaction.[24] |

| 2018 | April 4–12 | Organization | CHAI updates its branding, introducing a new logo with a green background and white "CHAI" lettering. The rebranding aims to reinforce its identity as a leader in AI alignment research.[25] |

| 2018 | April 9 | Publication | The Alignment Newsletter is publicly launched by CHAI affiliate Rohin Shah. This weekly newsletter consolidates AI safety updates, making it an essential resource for researchers and enthusiasts.[26] |

| 2018 | April 28–29 | Workshop | CHAI's second annual workshop convenes researchers, industry experts, and policymakers to discuss advances in AI alignment. Key topics include reward modeling, interpretability, and cooperative AI systems.[27] |

| 2018 | July 2 | Publication | Thomas Krendl Gilbert publishes A Broader View on Bias in Automated Decision-Making at ICML 2018. The work critiques bias in AI systems and offers strategies for promoting fairness and ethical standards in automated decisions.[28] |

| 2018 | July 13 | Workshop | Daniel Filan presents Exploring Hierarchy-Aware Inverse Reinforcement Learning at the 1st Workshop on Goal Specifications for Reinforcement Learning. The work advances understanding of how AI systems align with complex, hierarchical human goals.[29] |

| 2018 | August 26 | Workshop | CHAI students participate in MIRI’s AI Alignment Workshop. The event addresses critical AI safety challenges, fostering collaboration among AI researchers and practitioners.[30] |

| 2018 | September 4 | Conference | Jaime Fisac presents research on robust AI interactions at three conferences, focusing on ensuring AI systems behave predictably in dynamic, uncertain environments.[31] |

| 2018 | October 31 | Recognition | Rosie Campbell is named one of the Top Women in AI Ethics on Social Media by Mia Dand. The recognition highlights her leadership in promoting ethical AI development.[32] |

| 2018 | December | Conference | CHAI researchers present their findings at NeurIPS 2018, engaging in discussions on AI policy, safety, and interpretability.[33] |

| 2018 | December | Podcast | Rohin Shah discusses Inverse Reinforcement Learning on the AI Alignment Podcast by the Future of Life Institute. His insights advance public understanding of technical AI alignment challenges.[34] |

| 2018 | December | Media Mention | Stuart Russell and Rosie Campbell appear in a Vox article on AI existential risks. They emphasize the need for stringent AI safety measures to mitigate potential harm.[35] |

| 2019 | January | Recognition | Stuart Russell receives the AAAI Feigenbaum Prize for his pioneering work in AI research and policy. His contributions to probabilistic reasoning and AI alignment further enhance CHAI's reputation as a leader in AI safety.[36] |

| 2019 | January 8 | Talks | Rosie Campbell delivers public talks at San Francisco and East Bay AI Meetups, discussing neural networks and CHAI's approach to AI safety. These talks foster broader community engagement with CHAI’s research.[37] |

| 2019 | January 17 | Conference | CHAI faculty present multiple papers at AAAI 2019, covering topics such as deception in security games, ethical implications of AI systems, and advancements in multi-agent reinforcement learning. Their contributions emphasize AI's ethical deployment and its societal impact.[38] |

| 2019 | January 20 | Publication | Alex Turner, a former CHAI intern, wins the AI Alignment Prize for his work on "penalizing impact via attainable utility preservation." This research offers a novel framework for regulating AI behavior to minimize unintended harm.[39] |

| 2019 | January 29 | Conference | At ACM FAT* 2019, Smitha Milli and Anca Dragan present research addressing the ethical implications of AI transparency and fairness. Their work highlights the importance of accountability in automated decision-making systems.[40] |

| 2019 | June 15 | Conference | At ICML 2019, CHAI researchers, including Rohin Shah, Pieter Abbeel, and Anca Dragan, present research on human-AI coordination and on accounting for human biases in reward inference, advancing scalable solutions for alignment.[41] |

| 2019 | July 5 | Publication | CHAI releases an open-source imitation learning library developed by Steven Wang, Adam Gleave, and Sam Toyer. The library provides implementations of, and benchmarks for, imitation learning algorithms such as GAIL and AIRL, advancing research in behavior modeling.[42] |

| 2019 | July 5 | Research Summary | Rohin Shah publishes an analysis of CHAI's work on human biases in reward inference. This summary offers key insights into how AI systems can align their decision-making with nuanced human behavior.[43] |

| 2019 | August 15 | Media Publication | Mark Nitzberg authors an article in WIRED advocating for an “FDA for algorithms,” calling for stricter regulatory oversight of AI development to enhance safety and transparency.[44] |

| 2019 | August 28 | Paper Submission | Thomas Krendl Gilbert submits "The Passions and the Reward Functions: Rival Views of AI Safety?" to FAT* 2020. The paper explores philosophical perspectives on aligning AI reward systems with human emotions.[45] |

| 2019 | September 28 | Newsletter | Rohin Shah expands the Alignment Newsletter, turning it into a resource widely regarded as essential for keeping up with the latest AI safety research.[46] |

| 2019 | November | Funding | Open Philanthropy recommends a $705,000 grant over two years to the Berkeley Existential Risk Initiative (BERI) to support its continued collaboration with the Center for Human-Compatible AI (CHAI). The funding provides resources for machine learning researchers at CHAI and supports initiatives focused on long-term risks from advanced AI systems. By easing operational constraints and enabling targeted technical research, the grant strengthens CHAI's capacity to work on AI alignment and on developing "provably beneficial" AI systems, consistent with Open Philanthropy’s focus on reducing existential risks. |

| 2020 | May 30 (submission), June 11 (date in paper) | Publication | The paper "AI Research Considerations for Human Existential Safety (ARCHES)" by Andrew Critch of the Center for Human-Compatible AI (CHAI) and David Krueger of the Montreal Institute for Learning Algorithms (MILA) is uploaded to arXiv.[48] MIRI's July 2020 newsletter calls it "a review of 29 AI (existential) safety research directions, each with an illustrative analogy, examples of current work and potential synergies between research directions, and discussion of ways the research approach might lower (or raise) existential risk."[49] Critch is interviewed about the paper on the AI Alignment Podcast released September 15.[50] |

| 2020 | June 1 | Workshop | CHAI holds its first virtual workshop in response to the COVID-19 pandemic. The event gathers 150 participants from the AI safety community, featuring discussions on reducing existential risks from advanced AI, fostering collaborations, and advancing research initiatives.[51] |

| 2020 | September 1 | Staff | CHAI welcomes six new PhD students: Yuxi Liu, Micah Carroll, Cassidy Laidlaw, Alex Gunning, Alyssa Dayan, and Jessy Lin. Advised by CHAI's principal investigators, the students bring backgrounds in areas such as mathematics, AI-human cooperation, and safety mechanisms.[52] |

| 2020 | September 10 | Publication | CHAI PhD student Rachel Freedman publishes two papers at IJCAI-20 workshops. "Choice Set Misspecification in Reward Inference" investigates errors in robot reward inference, while "Aligning with Heterogeneous Preferences for Kidney Exchange" explores preference aggregation for optimizing kidney exchange programs. Both papers illustrate practical applications of CHAI's AI safety research.[53] |

| 2020 | October 10 | Publication | Brian Christian publishes "The Alignment Problem: Machine Learning and Human Values," a comprehensive examination of AI safety challenges and advances. The book highlights CHAI’s contributions to the field, including technical progress and ethical considerations.[54] |

| 2020 | October 21 | Workshop | CHAI hosts a virtual launch event for Brian Christian’s book "The Alignment Problem." The event includes an interview with the author, moderated by journalist Nora Young, and an audience Q&A session focusing on AI safety and ethical frameworks.[55] |

| 2020 | November 12 | Internship | CHAI opens applications for its 2021 research internship program, offering mentorship opportunities in AI safety research. Interns participate in seminars, workshops, and hands-on projects. Application deadlines are set for November 23 (early) and December 13 (final).[56] |

| 2020 | December | Funding | The Survival and Flourishing Fund (SFF) awards $247,000 to BERI to support its collaboration with the Center for Human-Compatible AI (CHAI). The funding lets BERI provide operational and logistical support for CHAI’s AI alignment research, streamlining administrative work so that CHAI researchers can focus on technical AI safety problems. |

| 2020 | December 20 | Funding | The Survival and Flourishing Fund (SFF) donates $799,000 to CHAI and $247,000 to BERI in support of their collaborative AI safety research. The funding supports work aimed at improving humanity’s long-term prospects by mitigating existential risks.[58] |

| 2021 | January 6 | Podcast | Daniel Filan debuts the AI X-risk Research Podcast (AXRP). The podcast focuses on AI alignment, technical challenges, and existential risks, bringing insights from experts and researchers working on ensuring AI safety.[59] |

| 2021 | January 25 | Podcast | Michael Dennis appears on the TalkRL podcast, discussing reinforcement learning, AI safety, and the challenges in creating safe and reliable AI systems. Dennis addresses the complexities of reward design and behavior modeling to align AI with human objectives.[60] |

| 2021 | February 5 | Publication | Thomas Krendl Gilbert presents the paper "AI Development for the Public Interest: From Abstraction Traps to Sociotechnical Risks" at IEEE ISTAS20. The paper critiques overly narrow abstractions in AI research and advocates integrating social context and ethical considerations into the development of AI systems.[61] |

| 2021 | February 9 | Debate | Stuart Russell debates Melanie Mitchell on The Munk Debates. Russell discusses AI safety concerns, the urgency of AI alignment research, and the risks associated with unregulated AI development. He stresses the importance of international governance to control AI technologies safely.[62] |

| 2021 | March 18 | Conference | At AAAI 2021, CHAI faculty and affiliates present multiple papers focusing on AI alignment, safe reinforcement learning, and improving AI-human interactions. Contributions include research on scalable reward modeling and methods for better interpretability of AI behavior.[63] |

| 2021 | March 25 | Award | Brian Christian's book "The Alignment Problem" receives an Eric and Wendy Schmidt Award for Excellence in Science Communication from the National Academies. The book explores AI alignment challenges and the ethical dilemmas of designing AI systems that behave as intended.[64] |

| 2021 | April 11 | Workshop | Stuart Russell and Caroline Jeanmaire organize a virtual workshop series titled "AI Economic Futures," in collaboration with the Global AI Council at the World Economic Forum. The series aims to discuss AI policy recommendations and their implications for future economic prosperity.[65] |

| 2021 | June 7-8 | Workshop | CHAI hosts its Fifth Annual Workshop, where researchers, students, and collaborators discuss recent progress in AI safety and alignment research. The workshop addresses key challenges in reward modeling, interpretability, and scalable alignment techniques.[66] |

| 2021 | July 9 | Competition | CHAI researchers contribute to the launch of the NeurIPS MineRL BASALT Competition. The competition aims to promote research in imitation learning, focusing on AI systems learning from human demonstration within the open-world Minecraft environment to improve behavior modeling.[67] |

| 2021 | August 7 | Award | Stuart Russell is named an Officer of the Most Excellent Order of the British Empire (OBE) for his significant contributions to artificial intelligence research and AI safety. This recognition highlights his impact on AI ethics and governance.[68] |

| 2021 | October 26 | Internship | CHAI announces its 2022 AI safety research internship program, with applications due by November 13, 2021. The program offers 3-4 month mentorship opportunities to work on AI safety research projects, either in-person at UC Berkeley or remotely. The internship aims to provide experience in technical AI safety research for individuals with a background in mathematics, computer science, or related fields. The selection process includes a cover letter or research proposal, programming assessments, and interviews.[69] |

| 2022 | January 18 | Publication | CHAI researchers publish several papers. Tom Lenaerts and co-authors explore "Voluntary safety commitments in AI development," suggesting that such commitments can help avoid over-regulation. In "Cross-Domain Imitation Learning via Optimal Transport," Arnaud Fickinger, Stuart Russell, and others address cross-domain transfer in continuous control domains. Scott Emmons and colleagues publish research on offline reinforcement learning showing that simple design choices can improve empirical performance on RL benchmarks.[70] |

| 2022 | February | Funding | Open Philanthropy awards a $1,126,160 grant to the Berkeley Existential Risk Initiative (BERI) to enhance its collaboration with the Center for Human-Compatible AI (CHAI). The funding supports the creation of an in-house compute cluster at CHAI for running large-scale machine learning experiments in AI alignment research, and enables CHAI to hire a part-time system administrator to operate and maintain the new infrastructure. The grant reflects Open Philanthropy’s strategy of providing targeted resources for technical AI safety research, addressing computational bottlenecks that can slow progress on reducing existential risks. |

| 2022 | February 17 | Award | Stuart Russell joins the inaugural cohort of AI2050 fellows, an initiative launched by Schmidt Futures with $125 million in funding over five years. The goal of AI2050 is to address the challenges of AI development. Russell’s focus is on enhancing AI interpretability, provable safety, and performance through probabilistic programming.[72] |

| 2022 | May | Internal Review | CHAI releases a progress report covering its growth, research outputs, and engagements from May 2022 to April 2023. The report lists 32 papers on topics such as assistance games, adversarial robustness, and the social impacts of AI, and describes CHAI’s advisory work on AI regulation and policy. It also highlights CHAI's research on the safe development of large language models and on AI vulnerabilities.[73] |

| 2022 | October 7-9 | Workshop | CHAI holds the NSF Convergence Accelerator Workshop on Provably Safe and Beneficial AI (PSBAI) to develop a research agenda for creating verifiable, well-founded AI systems. The workshop gathers 51 experts from fields such as AI, ethics, and law, with the aim of supporting the safe integration of AI into society.[74] |

| 2022 | November 18 | Publication | A paper titled “Time-Efficient Reward Learning via Visually Assisted Cluster Ranking,” written by Micah Carroll, Anca Dragan, and collaborators, is accepted at the Human-in-the-loop Learning (HILL) Workshop at NeurIPS 2022. The paper aims to make reward learning more efficient by using data visualization so that humans can label clusters of data points at once rather than one at a time, making better use of human feedback.[75] |

| 2022 | December 12 | Publication | CHAI researcher Justin Svegliato publishes a paper in the Artificial Intelligence Journal on competence-aware systems (CAS). CAS are designed to understand and reason about their own competence and adjust their level of autonomy based on interactions with human authority, optimizing autonomy in varying situations.[76] |

| 2022 | December 29 | Publication | CHAI researcher Tom Lenaerts co-authors a publication in Nature titled "Fast deliberation is related to unconditional behaviour in iterated Prisoners’ Dilemma experiments." The research investigates the relationship between cognitive effort and social value orientation, analyzing how response times in strategic situations reflect different social behaviors.[77] |

| 2023 | March 10 | Publication | CHAI begins contributing to discussions of AI takeover scenarios in which AI systems that maximize objectives such as productive output become outer-misaligned, potentially posing existential risks. The research examines how AI could affect resources critical to humans and how competitive pressures make transformative AI difficult to deploy safely, and it emphasizes the need for robust AI alignment and regulation.[78] |

| 2023 | May | Award | Stuart Russell, professor at UC Berkeley and founder of CHAI, receives the ACM-AAAI Allen Newell Award for foundational contributions to AI. The award honors career achievements with broad impact within computer science or across multiple disciplines. Russell is noted for work including the widely used textbook "Artificial Intelligence: A Modern Approach" and his focus on AI safety.[82] |

| 2023 | June 16-18 | Workshop | CHAI hosts its 7th annual workshop at Asilomar Conference Grounds, Pacific Grove, California. Nearly 200 attendees participate in discussions, lightning talks, and group activities on AI safety and alignment research. Casual activities like beach walks and bonfires build community within the AI safety field.[79] |

| 2023 | September 7 | Award | Stuart Russell, founder of CHAI and professor at UC Berkeley, is named one of TIME's 100 Most Influential People in AI. Russell is recognized as a leading thinker on AI safety, both for his contributions to responsible AI development and for his advocacy, including his support for pausing large-scale AI experiments.[81] |

| 2023 | September 22 | Workshop | CHAI holds a virtual sister workshop on the Ethical Design of AIs (EDAI) that complements the Provably Safe and Beneficial AI (PSBAI) workshop. The sessions focus on ethical principles, human-centered AI design, governance, and addressing domain-specific challenges in implementing ethical AI systems.[80] |

| 2023 | November 10 | Presentation | Jonathan Stray, CHAI Senior Scientist, presents a talk titled “Orienting AI Toward Peace” at Stanford's "Beyond Moderation: How We Can Use Technology to De-Escalate Political Conflict" conference. He proposes strategies for AI to avoid escalating political conflicts, including defining desirable conflicts, developing conflict indicators, and incorporating this feedback into AI objectives.[83] |

| 2024 | March 5 | Publication | CHAI researchers publish a study on challenges arising from partial observability in AI systems. The study examines how AI systems can misinterpret human feedback when human evaluators have limited information, which can lead to unintentional deception or overly compensatory behavior by the AI. The research aims to improve AI alignment with human interests through more refined feedback mechanisms.[84] |